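When testing bandwidth and troubleshooting bottlenecks, I prefer to use iperf. If you insist on testing bandwidth with FTP, it’s important NOT to use regular files. If you transfer actual files, the transfer could be limited by disk I/O: reads of the source file on the client and writes of the destination file on the server. To eliminate this, you can FTP from /dev/zero to /dev/null. It sounds super easy, but you have to use FTP in a special way to get it to read from and write to special devices. The trick is that the classic command-line ftp client treats a local file name beginning with `|` as a shell command and sends that command's output as the file contents.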
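Before involving the network at all, it can be worth confirming that the zero source is not itself the limit. The following is a minimal sketch, assuming a dd that prints a throughput summary on stderr (GNU dd does); the function name `zero_baseline` and the default block count are made up for this illustration.

```shell
#!/bin/bash
# Local baseline: copy zeros straight to /dev/null, with no disk or network involved.
# zero_baseline is a hypothetical helper name; the default of 32768 blocks
# at 32k each is 1 GiB and is an arbitrary choice.
zero_baseline() {
    local blocks=${1:-32768}
    dd if=/dev/zero of=/dev/null bs=32k count="$blocks"
}

zero_baseline
```

If the rate dd reports here is far above what the link can carry, any lower figure seen over FTP is coming from the network path or the FTP endpoints rather than from generating the data.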

Here’s a little script. Be sure to replace the destination IP address, username and password with actual values:

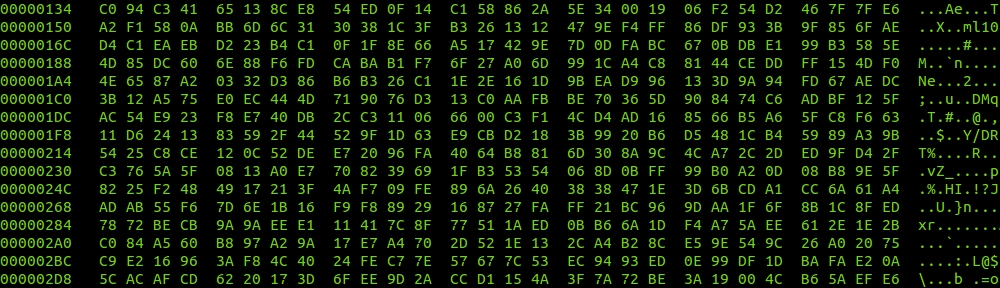

    # cat ftp_dev_null.sh
    #!/bin/bash
    /usr/bin/ftp -n <IP address of machine> <<END
    verbose on
    user <username> <password>
    bin
    put "|dd if=/dev/zero bs=32k" /dev/null
    bye
    END
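The script above sends zeros until you interrupt it. If you would rather have the transfer stop by itself, one possible variation is to give dd a `count=`. This is only a sketch: the environment variable names `FTP_HOST`, `FTP_USER`, `FTP_PASS`, and `BLOCKS`, and the helper `ftp_commands`, are assumptions introduced here, not part of the original script.

```shell
#!/bin/bash
# Fixed-size variant of the /dev/zero -> /dev/null FTP test.
# FTP_HOST, FTP_USER, FTP_PASS, BLOCKS and ftp_commands are hypothetical names.

# Print the FTP command stream. 32768 blocks of 32k is 1 GiB.
ftp_commands() {
    local user=$1 pass=$2 blocks=${3:-32768}
    cat <<END
verbose on
user $user $pass
bin
put "|dd if=/dev/zero bs=32k count=$blocks" /dev/null
bye
END
}

# Only start a transfer when a host is supplied, so the command
# stream can also be inspected without touching the network.
if [ -n "${FTP_HOST:-}" ]; then
    ftp_commands "$FTP_USER" "$FTP_PASS" "${BLOCKS:-32768}" | /usr/bin/ftp -n "$FTP_HOST"
fi
```

Running `ftp_commands someuser somepass 10` on its own prints the commands that would be fed to ftp, which is a cheap way to check the quoting of the `put` line. The sample run that follows is from the original, unbounded script.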

    # ftp_dev_null.sh
    Verbose mode on.
    331 Password required for fordodone
    230 User fordodone logged in
    Remote system type is UNIX.
    Using binary mode to transfer files.
    200 Type set to I
    local: |dd if=/dev/zero bs=32k remote: /dev/null
    200 PORT command successful
    150 Opening BINARY mode data connection for /dev/null
    ^C
    send aborted
    waiting for remote to finish abort
    129188+0 records in
    129187+0 records out
    4233199616 bytes (4.2 GB) copied, 145.851 s, 29.0 MB/s
    226 Transfer complete
    4233142272 bytes sent in 145.82 secs (28350.0 kB/s)
    221 Goodbye.

In this case I was getting around 230 Mbit/s (29.0 MB/s × 8) over an IPsec tunnel between my client and the FTP server. Not too bad.
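The bits-per-second figure comes straight from the byte count and elapsed time that ftp prints on its "bytes sent" line. As a quick check of the arithmetic, here is a small helper; the name `to_mbits` is made up for this illustration, and it assumes decimal megabits (1 Mbit = 1,000,000 bits).

```shell
#!/bin/bash
# to_mbits BYTES SECONDS
# Convert a byte count and elapsed time into megabits per second.
# Hypothetical helper; uses decimal megabits (10^6 bits).
to_mbits() {
    LC_ALL=C awk -v b="$1" -v s="$2" 'BEGIN { printf "%.1f\n", b * 8 / s / 1000000 }'
}

# Figures taken from the ftp summary line in the run above.
to_mbits 4233142272 145.82
```

With the numbers from the run above this prints 232.2, which matches the rough 230 Mbit/s estimate.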