Using find to act on files is very useful, but it gets trickier when the files it finds need different actions depending on their type. For example, suppose log files named foo.log are compressed to foo.log.gz after 10 days, so a single find returns both plain-text and gzipped files. Extend find with -exec and a bash shell that checks each file's extension and runs grep or zgrep accordingly, then pipe the results through awk (or whatever else) to parse out what you need.
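
The extension check can also be written with a case statement instead of regex tests. The following is a minimal sketch, not the exact command from this post: the function name `search_logs` is hypothetical, it uses plain `sh` rather than bash, and it batches files with `{} +` instead of running one shell per file. It assumes `zgrep` is installed.

```shell
# search_logs PATTERN [DIR]
# Hypothetical helper: greps every foo.log* file under DIR (default: .),
# choosing grep or zgrep from the file extension.
search_logs() {
    find "${2:-.}" -type f -name 'foo.log*' -exec sh -c '
        pattern=$1
        shift
        for file in "$@"; do
            case $file in
                *.log.gz) zgrep -e "$pattern" "$file" ;;  # compressed logs
                *.log)    grep -e "$pattern" "$file" ;;   # plain-text logs
            esac
        done
    ' sh "$1" {} +
}
```

Calling `search_logs foobar .` prints the matching lines from both kinds of file, ready to pipe into awk. Files that match `foo.log*` but end in neither `.log` nor `.log.gz` are skipped.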

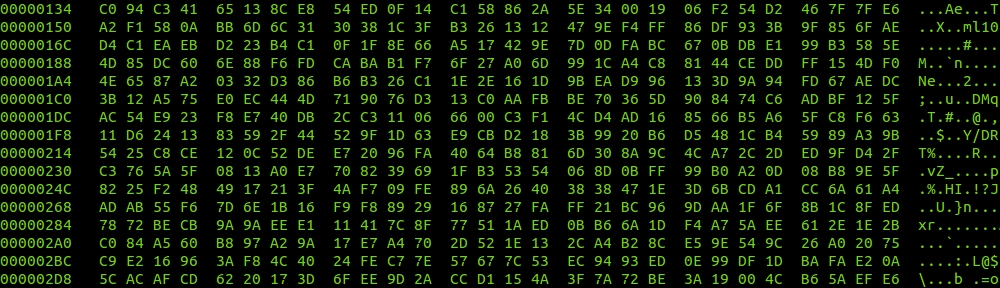

# find . -type f -name 'foo.log*' -exec bash -c 'if [[ $0 =~ \.log$ ]]; then grep foobar "$0"; elif [[ $0 =~ \.log\.gz$ ]]; then zgrep foobar "$0"; fi' {} \; | awk '{if(/typea/)a++; if(/typeb/)b++; tot++} END {print "typea: "a" - "a*100/tot"%"; print "typeb: "b" - "b*100/tot"%"; print "typec: "tot-(a+b)" - "(tot-(a+b))*100/tot"%"; print "total: "tot;}'

typea: 5301 - 67.4771%

typeb: 2539 - 32.3192%

typec: 16 - 0.203666%

total: 7856
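
The awk tally divides by the total, so it fails with a division-by-zero error if nothing matches. The following is a minimal sketch of the same counting logic wrapped in a function that guards against empty input. The name `summarize_types` is hypothetical, and it rounds percentages to two decimals with printf, so its output differs slightly from the figures above.

```shell
# summarize_types: reads matched log lines on stdin and prints per-type
# counts and percentages. Hypothetical helper name.
summarize_types() {
    awk '
        /typea/ { a++ }
        /typeb/ { b++ }
        { tot++ }
        END {
            # Avoid dividing by zero when no lines were read.
            if (tot == 0) { print "total: 0"; exit }
            c = tot - (a + b)   # everything that is neither typea nor typeb
            printf "typea: %d - %.2f%%\n", a, a * 100 / tot
            printf "typeb: %d - %.2f%%\n", b, b * 100 / tot
            printf "typec: %d - %.2f%%\n", c, c * 100 / tot
            printf "total: %d\n", tot
        }
    '
}
```

It takes the place of the inline awk program at the end of the pipeline, for example `... {} \; | summarize_types`. As in the original, a line containing both typea and typeb is counted under both.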