One pattern I see over and over again when looking at continuous delivery pipelines is the use of an ssh client and a private key to connect to a remote ssh endpoint. Triggering scripts, restarting services, or moving files around could all be part of your deployment process. Keeping a private ssh key “secured” is critical to limiting access to the resources reachable over ssh. Whether you use your own in-house application (read “unreliable mess of shell scripts”), Travis CI, Bitbucket Pipelines, or some other CD solution, you may find yourself wanting to store an ssh private key for use during deployment.

Bitbucket Pipelines already has a built-in way to store and provide ssh deploy keys; this is an example of how to roll your own instead. The steps are pretty simple. We create an encrypted ssh private key, its corresponding public key, and a 64-character passphrase for the private key. The encrypted private key and the public key get checked in to the repository, and the passphrase gets stored as a “secured” Bitbucket Pipelines variable. At build time, the private key gets decrypted into a file using the passphrase variable. The ssh client can then use that key to connect to whatever resources you need.

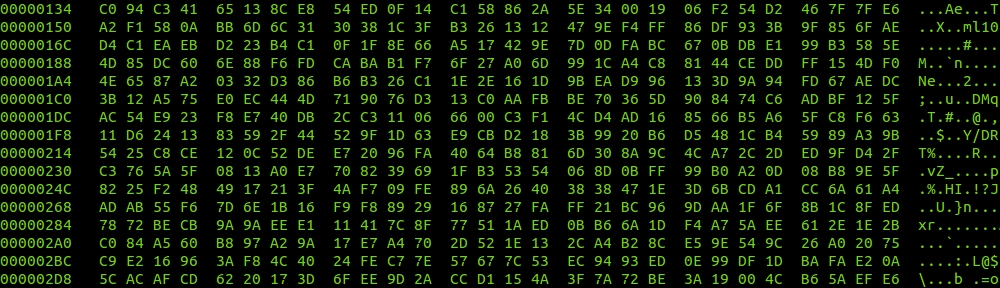

#!/bin/bash

set -e

if ! command -v openssl >/dev/null || ! command -v ssh-keygen >/dev/null || ! command -v jq >/dev/null || ! command -v curl >/dev/null

then

echo

echo "need ssh-keygen, openssl, jq, and curl to continue"

echo

exit 1

fi

# generate random string of 64 characters

echo

echo "generating random string for ssh deploy key passphrase..."

DEPLOY_KEY_PASSPHRASE=$(LC_ALL=C tr -dc 'A-Za-z0-9#^%@' < /dev/urandom | head -c "${1:-64}")

# save passphrase in file to be used by openssl

echo

echo "saving passphrase for use with openssl..."

echo -n "${DEPLOY_KEY_PASSPHRASE}" >passphrase.txt

# generate encrypted ssh rsa key using passphrase

echo

echo "generating encrypted ssh private key with passphrase..."

openssl genrsa -out id_rsa_deploy.pem -passout file:passphrase.txt -aes256 4096

chmod 600 id_rsa_deploy.pem

# decrypt ssh rsa key using passphrase

echo

echo "decrypting ssh private key with passphrase to temp file..."

openssl rsa -in id_rsa_deploy.pem -out id_rsa_deploy.tmp -passin file:passphrase.txt

chmod 600 id_rsa_deploy.tmp

# generate public ssh key for use on target deployment server

echo

echo "generating public key from private key..."

ssh-keygen -y -f id_rsa_deploy.tmp > id_rsa_deploy.pub

# remove unencrypted ssh rsa key

echo

echo "removing unencrypted temp file..."

rm id_rsa_deploy.tmp

# ask user for bitbucket credentials

echo

echo "PUT IN YOUR BITBUCKET CREDENTIALS TO CREATE/UPDATE PIPELINES SSH KEY PASSPHRASE VARIABLE (password does not echo)"

echo

echo -n "enter bitbucket username: "

read -r BBUSER

echo -n "enter bitbucket password: "

read -rs BBPASS

echo

# bitbucket API doesn't have "UPSERT" capability for creating(if not exists) or updating(if exists) variables

# get variable if exists

echo

echo "getting variable uuid if variable exists"

DEPLOY_KEY_PASSPHRASE_UUID=$(curl -s --user "${BBUSER}:${BBPASS}" -X GET -H "Content-Type: application/json" https://api.bitbucket.org/2.0/repositories/fordodone/pipelines-test/pipelines_config/variables/ | jq -r '.values[]|select(.key=="DEPLOY_KEY_PASSPHRASE").uuid')

if [ "${DEPLOY_KEY_PASSPHRASE_UUID}" == "" ]

then

# create bitbucket pipeline variable

echo

echo "DEPLOY_KEY_PASSPHRASE variable does not exist... creating DEPLOY_KEY_PASSPHRASE"

curl -s --user "${BBUSER}:${BBPASS}" -X POST -H "Content-Type: application/json" -d '{"key":"DEPLOY_KEY_PASSPHRASE","value":"'"${DEPLOY_KEY_PASSPHRASE}"'","secured":"true"}' https://api.bitbucket.org/2.0/repositories/fordodone/pipelines-test/pipelines_config/variables/

echo

echo

else

# update existing bitbucket pipeline variable by uuid

# use --globoff to avoid curl interpreting curly braces in the variable uuid

echo

echo "DEPLOY_KEY_PASSPHRASE variable exists... updating DEPLOY_KEY_PASSPHRASE"

curl --globoff -s --user "${BBUSER}:${BBPASS}" -X PUT -H "Content-Type: application/json" -d '{"key":"DEPLOY_KEY_PASSPHRASE","value":"'"${DEPLOY_KEY_PASSPHRASE}"'","secured":"true","uuid":"'"${DEPLOY_KEY_PASSPHRASE_UUID}"'"}' "https://api.bitbucket.org/2.0/repositories/fordodone/pipelines-test/pipelines_config/variables/${DEPLOY_KEY_PASSPHRASE_UUID}"

fi

# after passphrase is stored in bitbucket remove passphrase file

echo

echo "DEPLOY_KEY_PASSPHRASE successfully stored on bitbucket, removing passphrase file..."

rm passphrase.txt

echo

echo "KEY ROLL COMPLETE"

echo "add, commit, and push encrypted private key and corresponding public key, update ssh targets with new public key"

echo " -> git add id_rsa_deploy.pem id_rsa_deploy.pub && git commit -m 'deploy ssh key roll' && git push"

echo

We use a Bitbucket username and password to authenticate the person running the script; they need access to insert the new ssh deploy key passphrase as a “secured” variable through the Bitbucket API. The person running the script never sees the passphrase and doesn’t need to care what it is. The script can be rerun at any time to roll the key pair and passphrase. That matters because when you need to roll a compromised key, you should never have to remember that damn openssl command that you have used your entire career but somehow have never memorized.
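The create-or-update decision in the script is tied to one hardcoded repository. As a rough sketch, assuming you want to reuse it across repositories, the choice of HTTP method and URL could be pulled into a small helper. The name `variable_endpoint` and its two arguments are made up for illustration; the URL layout is copied from the script above.

```shell
#!/bin/bash
# Hypothetical helper (not part of the original script).
# Prints "<METHOD> <URL>" for the Pipelines variable call:
#   empty uuid     -> POST to the collection (create)
#   non-empty uuid -> PUT to the variable itself (update)
variable_endpoint() {
  local repo="$1"   # workspace/repo slug, e.g. fordodone/pipelines-test
  local uuid="$2"   # variable uuid as returned by the API, may be empty
  local base="https://api.bitbucket.org/2.0/repositories/${repo}/pipelines_config/variables/"

  if [ -z "${uuid}" ]; then
    echo "POST ${base}"
  else
    echo "PUT ${base}${uuid}"
  fi
}
```

The output can be split with `read -r method url` and passed to curl as `-X "${method}" "${url}"`. The `--globoff` flag is still needed on the update path because the uuid contains curly braces.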

Here’s how you could use the key in a Bitbucket Pipelines build container:

#!/bin/bash

set -e

# store passphrase from Bitbucket secured variable into file

# file is on /dev/shm tmpfs in memory (don't put secrets on disk)

echo "creating passphrase file from Bitbucket secured variable DEPLOY_KEY_PASSPHRASE"

echo -n "${DEPLOY_KEY_PASSPHRASE}" >/dev/shm/passphrase.txt

# use passphrase to decrypt ssh key into tmp file (again in memory backed file system)

echo "writing decrypted ssh key to tmp file"

openssl rsa -in id_rsa_deploy.pem -out /dev/shm/id_rsa_deploy.tmp -passin file:/dev/shm/passphrase.txt

chmod 600 /dev/shm/id_rsa_deploy.tmp

# invoke ssh-agent to manage keys

echo "starting ssh-agent"

eval "$(ssh-agent -s)"

# add ssh key to ssh-agent

echo "adding key to ssh-agent"

ssh-add /dev/shm/id_rsa_deploy.tmp

# remove tmp ssh key and passphrase now that the key is in ssh-agent

echo "cleaning up decrypted key and passphrase file"

rm /dev/shm/id_rsa_deploy.tmp /dev/shm/passphrase.txt

# get ssh host key

echo "getting host keys"

mkdir -p "${HOME}/.ssh" && ssh-keyscan -H someserver.fordodone.com >> "${HOME}/.ssh/known_hosts"

# test the key

echo "testing key"

ssh someserver.fordodone.com "uptime"

The script uses a memory-backed tmpfs file system to hold the key and passphrase, and ssh-agent to make the key available to the session. How secure is secured enough? Whether you use the built-in Pipelines ssh deploy key, or this method to roll your own and store a passphrase in a variable, or store the ssh key as a base64 encoded blob in a variable, or however you do it, you essentially have to trust the provider to keep your secrets secret.
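One gap in the pipeline script: with `set -e`, a failed `openssl` or `ssh-add` call stops the script before the `rm` line runs, leaving the decrypted key behind. A minimal sketch of one way around that is to do the work in a subshell with an `EXIT` trap. The function name `load_deploy_key` and the `SECRET_DIR` override are assumptions for illustration; the file names and commands mirror the script above.

```shell
#!/bin/bash
# Sketch only: load_deploy_key and SECRET_DIR are hypothetical names.
# Decrypts the key inside a private temp directory and removes the plaintext
# on every exit path, including a failed openssl or ssh-add call.
# Expects DEPLOY_KEY_PASSPHRASE in the environment and a running ssh-agent.

load_deploy_key() {
  local encrypted_key="$1"
  (
    umask 077  # files created below are readable by the current user only
    work_dir="$(mktemp -d "${SECRET_DIR:-/dev/shm}/deploy.XXXXXX")" || exit 1
    # The EXIT trap fires when this subshell ends, whether or not a step failed.
    trap 'rm -rf "${work_dir}"' EXIT

    printf '%s' "${DEPLOY_KEY_PASSPHRASE}" > "${work_dir}/passphrase.txt" &&
      openssl rsa -in "${encrypted_key}" \
        -out "${work_dir}/id_rsa_deploy.tmp" \
        -passin "file:${work_dir}/passphrase.txt" &&
      ssh-add "${work_dir}/id_rsa_deploy.tmp"
  )
}
```

Calling `load_deploy_key id_rsa_deploy.pem` after starting ssh-agent would replace the decrypt, add, and cleanup steps. The key still ends up in the agent because the subshell inherits `SSH_AUTH_SOCK`.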

There are some changes you could make to all of this, but it’s good boilerplate. Other things to think about:

Rewrite this in Python and do automated key rolls once a day with Lambda, storing the dedicated Bitbucket user/pass and git key in KMS.

Do you really need ssh-agent?

You could turn off strict host key checking instead of using ssh-keyscan.

Could this be useful for x509 TLS certs?
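On the ssh-agent question, one alternative is to skip the agent and point ssh at the decrypted key file directly. This is only a sketch: `run_remote` is a made-up wrapper name, while `-i`, `IdentitiesOnly`, and `BatchMode` are standard OpenSSH client options. The trade-off is that the decrypted key has to stay on tmpfs until the last ssh call has finished, instead of being deleted right after `ssh-add`.

```shell
#!/bin/bash
# Hypothetical wrapper: run a command on a remote host using a key file
# directly, without ssh-agent.
#   $1    path to the decrypted private key (e.g. on /dev/shm)
#   $2    remote host
#   rest  command to run on the remote host
run_remote() {
  local key_file="$1"
  local host="$2"
  shift 2
  # IdentitiesOnly: offer only the key given with -i
  # BatchMode: fail instead of prompting, which suits a CI container
  ssh -i "${key_file}" -o IdentitiesOnly=yes -o BatchMode=yes "${host}" "$@"
}
```

With this, the test step from the pipeline script would become `run_remote /dev/shm/id_rsa_deploy.tmp someserver.fordodone.com uptime`, followed by removing the key file.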