Greenfield projects come with the huge benefit of having no existing or legacy code or infrastructure to navigate when designing an application. In some ways, having a greenfield app land in your lap is the stuff a developer’s dreams are made of. But the amazing opportunity of a “start from scratch” project comes with a higher level of creative burden. The goals of the final product dictate the software architecture and, in turn, the systems infrastructure, both of which have yet to be conceived.

Many times this question (or one similarly themed) arises:

“What database is right for my application?”

Often there is a clear and straightforward answer to the question, but in some cases a savvy software architect might wish to prototype against various types of persistent data stores.

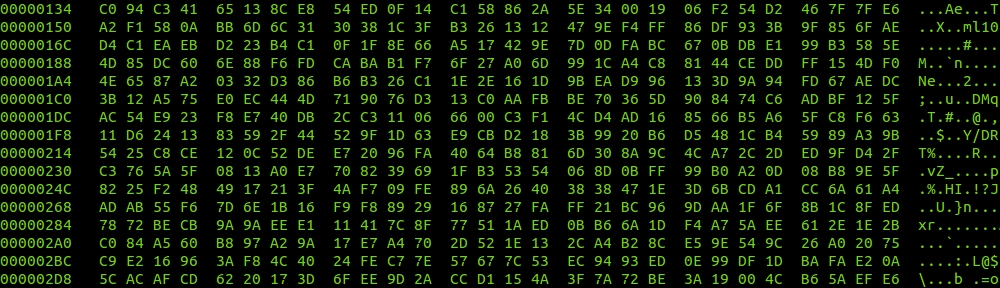

This docker-compose.yml has a Node.js container and four data store containers to play around with: MySQL, PostgreSQL, DynamoDB, and MongoDB. They can be run simultaneously or one at a time, which makes the setup well suited to testing these technologies locally while the application’s software architecture is still taking shape. The final version of your application infrastructure is still a long way off, but at least it will be easy to test drive different solutions at the outset of the project.

    version: '2'

    services:

      my-api-node:
        container_name: my-api-node
        image: node:latest
        volumes:
          - ./:/app/
        ports:
          - '3000:3000'

      my-api-mysql:
        container_name: my-api-mysql
        image: mysql:5.7
        #image: mysql:5.6
        environment:
          MYSQL_ROOT_PASSWORD: secretpassword
          MYSQL_USER: my-api-node-local
          MYSQL_PASSWORD: secretpassword
          MYSQL_DATABASE: my_api_local
        volumes:
          - my-api-mysql-data:/var/lib/mysql/
        ports:
          - '3306:3306'

      my-api-pgsql:
        container_name: my-api-pgsql
        image: postgres:9.6
        environment:
          POSTGRES_USER: my-api-node-local-pgsqltest
          POSTGRES_PASSWORD: secretpassword
          POSTGRES_DB: my_api_local_pgsqltest
        volumes:
          - my-api-pgsql-data:/var/lib/postgresql/data/
        ports:
          - '5432:5432'

      my-api-dynamodb:
        container_name: my-api-dynamodb
        image: dwmkerr/dynamodb:latest
        volumes:
          - my-api-dynamodb-data:/data
        command: -sharedDb
        ports:
          - '8000:8000'

      my-api-mongo:
        container_name: my-api-mongo
        image: mongo:3.4
        volumes:
          - my-api-mongo-data:/data/db
        ports:
          - '27017:27017'

    volumes:
      my-api-mysql-data:
      my-api-pgsql-data:
      my-api-dynamodb-data:
      my-api-mongo-data:

I love Docker. I use Docker a lot. And like any tool, you can do really stupid things with it. A great piece of advice comes to mind when writing a docker-compose project like this one:

“Just because you can, doesn’t mean you should.”

This statement elicits strong emotions from both halves of a sysadmin’s brain. The first shudders at the painful thought of running multiple databases for an application (local or otherwise), and the other shouts “Hold my beer!” Which half will you listen to today?
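
If you do listen to the second half, here is a minimal sketch of how code in the Node.js container might address each store. It assumes the containers share the default network that Compose creates, so each store is reachable by its service name, and it reuses the credentials from the compose file above. The `stores` map, the `connectionUrl` helper, and the `DATA_STORE` environment variable are hypothetical names made up for this illustration, not part of any driver's API.

```javascript
// Connection settings copied from the docker-compose.yml above.
// Hostnames are the compose service names (an assumption: the app runs
// inside the my-api-node container on the same compose network).
const stores = {
  mysql: {
    protocol: 'mysql',
    host: 'my-api-mysql',
    port: 3306,
    user: 'my-api-node-local',
    password: 'secretpassword',
    database: 'my_api_local',
  },
  postgres: {
    protocol: 'postgresql',
    host: 'my-api-pgsql',
    port: 5432,
    user: 'my-api-node-local-pgsqltest',
    password: 'secretpassword',
    database: 'my_api_local_pgsqltest',
  },
  // The DynamoDB and MongoDB services define no credentials in the compose file.
  dynamodb: { protocol: 'http', host: 'my-api-dynamodb', port: 8000 },
  mongo: { protocol: 'mongodb', host: 'my-api-mongo', port: 27017 },
};

// Hypothetical helper: build a connection URL for one of the stores above.
function connectionUrl(name) {
  const s = stores[name];
  if (!s) {
    throw new Error(`Unknown data store: ${name}`);
  }
  const auth = s.user
    ? `${encodeURIComponent(s.user)}:${encodeURIComponent(s.password)}@`
    : '';
  const path = s.database ? `/${s.database}` : '';
  return `${s.protocol}://${auth}${s.host}:${s.port}${path}`;
}

// DATA_STORE is a hypothetical variable for switching stores while prototyping.
const selected = process.env.DATA_STORE || 'postgres';
console.log(`${selected}: ${connectionUrl(selected)}`);
```

When connecting from the host machine instead of from inside the Node.js container, swap each hostname for `localhost`; the ports are the same because the compose file publishes them one-to-one.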