I once had a migration project that involved moving 40TB of data from source to destination NFS volumes. Naturally, I went with rsync. This was basically the command for the initial transfers:

rsync -a --out-format="transfer:%t,%b,%f" --itemize-changes /mnt/srcvol /mnt/destvol >> /log/file.log

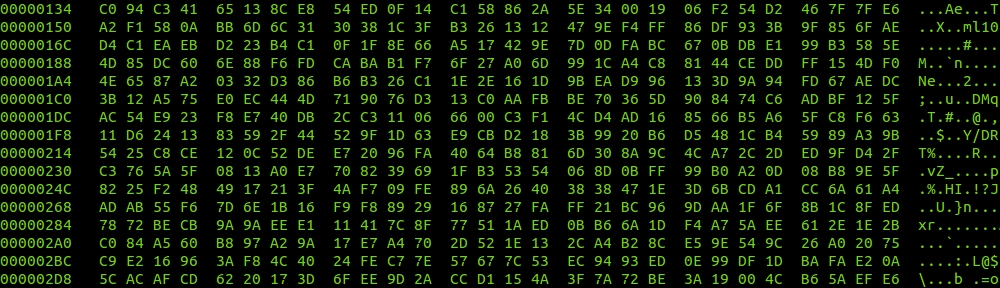

Pretty simple, right? The log is a simple CSV file whose lines look like this:

transfer:2013/05/02 10:16:13,35291,mnt/srcvol/archive/foo/bar/barfoo/IMAGES/1256562131100.jpg

The customer asked for daily progress updates. I said no problem, and this one-liner takes care of it:

# grep transfer /log/file.log | awk -F "," '{if ($2!=0) {i=i+$2; x++}} END {print "total Gbytes: "i/1073741824"\ntotal files: "x}'

total Gbytes: 1153.29

total files: 123686

In the rsync command above, %t is the timestamp (2013/05/02 10:16:13), %b is the number of bytes transferred (35291), and %f is the whole file path. By adding up the %b column and counting how many entries you added, you get both the total bytes transferred and the total number of files transferred. Directories show up as 0-byte transfers, so awk skips them. I also threw in the division by 1073741824 (1024*1024*1024), which converts bytes to gibibytes (GiB).
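For readability, the same idea can be spelled out as a small shell function. This is only a sketch: the name rsync_totals is made up for illustration, and it assumes every log line follows the "transfer:%t,%b,%f" format shown above. Because the timestamp contains no commas, the second comma-separated field is always %b, even when a file name itself contains a comma.

```shell
# Hypothetical helper: sum the %b column and count non-empty transfers.
rsync_totals() {
    awk -F',' '
        # Only look at rsync --out-format lines, which start with "transfer:".
        /^transfer:/ && $2 > 0 {
            bytes += $2    # field 2 is %b, the bytes transferred
            files++        # zero-byte entries (directories) never reach here
        }
        END {
            printf "total Gbytes: %.2f\ntotal files: %d\n", bytes / (1024 * 1024 * 1024), files
        }
    ' "$@"
}
```

Called as `rsync_totals /log/file.log`, it prints the same two lines as the one-liner. Note that zero-byte files are skipped along with directories, since both are logged with %b equal to 0.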

I ended up putting it in a shell script and adding options such as filtering transfers for a particular day or hour, better handling of the Gbytes number, a rate calculation, and the ability to combine logs from multiple data-moving servers.
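I no longer have that script handy, so what follows is not it, just a rough sketch of how those options could fit together. The function name rsync_report, its argument order, and the MiB/s rate unit are all assumptions made for illustration. The rate is averaged over the span between the earliest and latest matching timestamps, and the date arithmetic ignores time zones and daylight saving.

```shell
# Hypothetical sketch: rsync_report TIMESTAMP_PREFIX LOGFILE...
# TIMESTAMP_PREFIX is matched against %t, e.g. "2013/05/02" or "2013/05/02 10";
# pass "" to include every transfer.
rsync_report() {
    prefix=$1
    shift
    awk -F',' -v prefix="$prefix" '
        # Days since a fixed origin for a Gregorian date (only differences matter).
        function days(y, m, d) {
            if (m <= 2) { y--; m += 12 }
            return 365 * y + int(y / 4) - int(y / 100) + int(y / 400) + int((153 * (m - 3) + 2) / 5) + d
        }
        # Convert "YYYY/MM/DD HH:MM:SS" to seconds, ignoring time zones and DST.
        function secs(ts,   p) {
            split(ts, p, "[/ :]")
            return days(p[1] + 0, p[2] + 0, p[3] + 0) * 86400 + p[4] * 3600 + p[5] * 60 + p[6]
        }
        /^transfer:/ && $2 > 0 {
            ts = substr($1, 10)                              # strip the leading "transfer:"
            if (substr(ts, 1, length(prefix)) != prefix) next   # keep only the requested day/hour
            bytes += $2
            files++
            # Fixed-width timestamps sort as strings, so logs can arrive in any order.
            if (first == "" || ts < first) first = ts
            if (ts > last) last = ts
        }
        END {
            elapsed = files ? secs(last) - secs(first) : 0
            printf "total Gbytes: %.2f\ntotal files: %d\n", bytes / 1073741824, files
            if (elapsed > 0)
                printf "average rate: %.2f MiB/s\n", bytes / 1048576 / elapsed
        }
    ' "$@"
}
```

For example, `rsync_report "2013/05/02" /log/server1.log /log/server2.log` would summarize one day across two servers (both log paths are placeholders). Tracking the earliest and latest timestamps by string comparison avoids having to sort or merge the logs first.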